Search Sketchfab or CGTrader for a Ghanaian character and you'll find generic, flattened "African" representations with wrong proportions, wrong skin texture, wrong features. Creatives across Ghana end up borrowing foreign assets to tell local stories. The technical execution is often flawless, but the authenticity is missing.

This project addresses that gap directly. Using photogrammetry and a carefully developed pipeline, I am building a library of digital assets from real Ghanaian subjects, starting with human characters and later expanding into objects and architecture.

The pipeline has seven stages, each with a defined input and output. The same sequence runs on every subject, which is what makes it scalable.
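One way to make "a defined input and output" concrete is to write the stages down as data and check that every input is produced by an earlier stage. This is a minimal sketch under my own assumptions: the stage names and artifact labels are my reading of the sections that follow, and `Stage`, `stage`, and `check_pipeline` are hypothetical helpers, not part of any tool used in the pipeline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    inputs: frozenset
    outputs: frozenset


def stage(name, inputs, outputs):
    """Hypothetical convenience constructor that converts lists to frozensets."""
    return Stage(name, frozenset(inputs), frozenset(outputs))


# Illustrative reading of the seven stages; labels are assumptions, not a spec.
PIPELINE = [
    stage("subject selection", [], ["subject"]),
    stage("photography", ["subject"], ["photo set"]),
    stage("photogrammetry", ["photo set"], ["scan mesh", "diffuse bake"]),
    stage("shape transfer", ["scan mesh"], ["genesis 9 morph"]),
    stage("proportion refinement", ["genesis 9 morph", "photo set"], ["refined morph"]),
    stage("texturing", ["refined morph", "diffuse bake"], ["texture maps"]),
    stage("export", ["refined morph", "texture maps"], ["fbx/obj/gltf files"]),
]


def check_pipeline(stages):
    """List every stage input that no earlier stage has produced."""
    available = set()
    problems = []
    for s in stages:
        missing = s.inputs - available
        if missing:
            problems.append(f"{s.name}: missing {sorted(missing)}")
        available |= s.outputs
    return problems


print(check_pipeline(PIPELINE))  # [] means every stage is fed by an earlier one
```

Writing the stages as data means a new subject, or a new asset type later on, can be checked against the same chain before any work starts.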

I look for subjects with distinctive faces and exaggerated features: strong brow bones, pronounced cheekbones, character-rich skin. These hold up better at lower polygon counts and produce more interesting variations when aged or stylised downstream. Authenticity starts here.

I shoot around the head at three height levels, plus full-figure front, side, and back views in A-pose. Close-up detail shots capture pores, wrinkles, and pigment variation that procedural tools cannot replicate later. Every shot is a texture reference as much as a geometry reference.

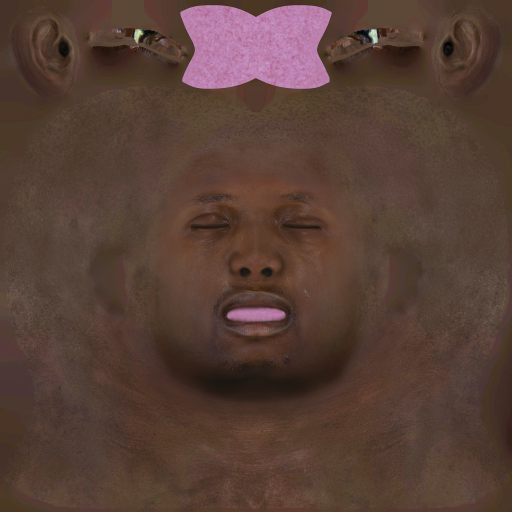
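The head coverage can be sketched as camera positions on horizontal rings, one per height level. This is a minimal geometric illustration, not a shooting guide: the radius, ring heights, and shot count below are hypothetical, and `capture_positions` and `angular_step_deg` are illustrative helpers. The angular step between neighbouring shots is the number that controls how much adjacent frames overlap.

```python
import math


def capture_positions(radius_m, ring_heights_m, shots_per_ring):
    """Camera (x, y, z) positions on horizontal rings around the head.

    Assumes the head centre is at the origin, y is up, and every ring uses the
    same radius with evenly spaced azimuths.
    """
    positions = []
    for height in ring_heights_m:
        for i in range(shots_per_ring):
            azimuth = 2 * math.pi * i / shots_per_ring
            positions.append((radius_m * math.cos(azimuth), height, radius_m * math.sin(azimuth)))
    return positions


def angular_step_deg(shots_per_ring):
    """Rotation between neighbouring shots on a ring; smaller steps mean more overlap."""
    return 360.0 / shots_per_ring


# Hypothetical plan: 1 m from the head, rings 0.3 m below, level with, and 0.3 m above its centre.
positions = capture_positions(1.0, [-0.3, 0.0, 0.3], 24)
print(len(positions), angular_step_deg(24))  # 72 15.0
```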

Images go into Metashape. Unusable frames are removed first. Then the standard sequence runs: photo alignment (sparse point cloud), dense cloud, mesh, and texture. The process is largely automated, but I manually clean up stray geometry floating off the surface. The output is a high-poly scan mesh and a raw diffuse texture bake.

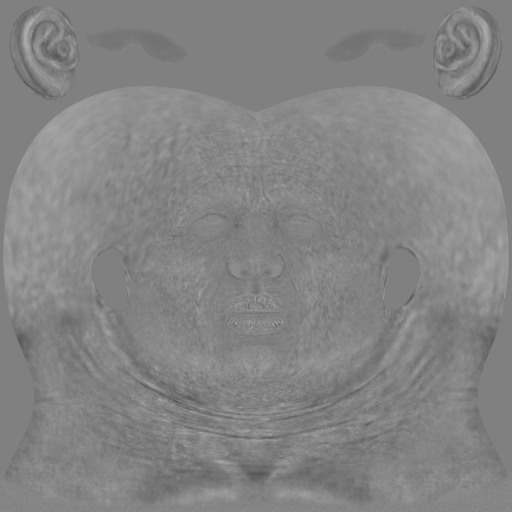
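I clean stray geometry by hand, but the same idea can be sketched as an automated filter: split the mesh into connected components and drop those that are a tiny fraction of the total. This is a generic technique, not a Metashape feature or the method used here; `remove_floating_islands` and its `min_fraction` threshold are hypothetical, and the mesh is assumed to be a plain list of faces given as vertex-index tuples.

```python
from collections import Counter


def remove_floating_islands(faces, min_fraction=0.01):
    """Drop connected components smaller than min_fraction of all faces.

    Faces that share a vertex belong to the same component (union-find).
    Kept faces are returned in their original order.
    """
    parent = {}

    def find(v):
        parent.setdefault(v, v)
        while parent[v] != v:
            parent[v] = parent[parent[v]]  # path halving keeps trees shallow
            v = parent[v]
        return v

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra

    for face in faces:
        for v in face[1:]:
            union(face[0], v)

    roots = [find(face[0]) for face in faces]
    sizes = Counter(roots)
    threshold = min_fraction * len(faces)
    return [face for face, root in zip(faces, roots) if sizes[root] >= threshold]


# Toy mesh: a connected strip of 10 triangles plus one floating triangle.
strip = [(i, i + 1, i + 2) for i in range(10)]
mesh = strip + [(100, 101, 102)]
print(len(remove_floating_islands(mesh, min_fraction=0.2)))  # 10
```

A size threshold is crude: it can also delete small legitimate pieces such as separate eyeball shells, which is one reason a manual pass is still worth doing.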

The scan's shape is transferred onto a Genesis 9 base mesh via Wrap3D or FaceGen, then loaded back into DAZ as a morph target. The subject's proportions now live inside a mesh that is already rigged, weighted, and compatible with thousands of existing DAZ assets.
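A morph target is conventionally stored as per-vertex offsets from the base mesh, which only works because the wrapped scan shares the Genesis 9 vertex count and order. The sketch below shows that arithmetic on plain coordinate tuples; `morph_deltas` and `apply_morphs` are hypothetical helpers, not DAZ Studio's API.

```python
def morph_deltas(base, target):
    """Per-vertex offsets (target - base) for two meshes with identical topology."""
    if len(base) != len(target):
        raise ValueError("base and target must have the same vertex count and order")
    return [tuple(t - b for b, t in zip(bv, tv)) for bv, tv in zip(base, target)]


def apply_morphs(base, morphs):
    """Return base + sum(weight * deltas) for a list of (deltas, weight) pairs."""
    result = [list(v) for v in base]
    for deltas, weight in morphs:
        for vertex, delta in zip(result, deltas):
            for axis in range(3):
                vertex[axis] += weight * delta[axis]
    return [tuple(v) for v in result]


# Two-vertex toy example; real meshes are far larger.
base = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
scan = [(0.0, 0.5, 0.0), (1.0, 0.0, 0.25)]
subject = morph_deltas(base, scan)
print(apply_morphs(base, [(subject, 0.5)]))  # [(0.0, 0.25, 0.0), (1.0, 0.0, 0.125)]
```

In this additive model, an ageing or de-ageing morph is simply another delta set applied at its own weight on top of the subject's morph.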

Proportions are refined against the A-pose reference photos, matching the real subject's body ratios. The morph system also allows aging or de-aging the character without rebuilding from scratch. One scan can produce multiple age variations of the same person.

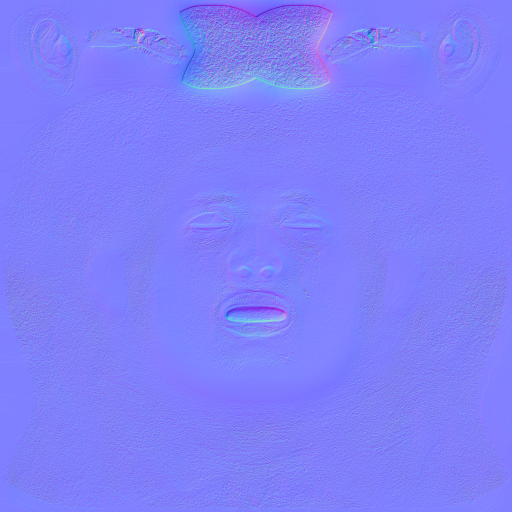
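Comparing body ratios rather than raw sizes is what allows photo measurements and mesh measurements to be compared at all. This is a minimal sketch, assuming landmark heights are measured upward from the floor in a front A-pose photo (pixels) and on the mesh (any unit), and assuming the photo was shot from far enough away that perspective distortion is small. The landmark names and the `ratios` and `ratio_errors` helpers are hypothetical.

```python
def ratios(heights):
    """Scale-free body ratios from landmark heights measured upward from the floor.

    Dividing by total height cancels the unit, so pixels and centimetres compare directly.
    """
    total = heights["crown"] - heights["floor"]
    return {
        "head": (heights["crown"] - heights["chin"]) / total,
        "torso": (heights["shoulder"] - heights["hip"]) / total,
        "legs": (heights["hip"] - heights["floor"]) / total,
    }


def ratio_errors(photo, mesh):
    """Mesh ratio minus photo ratio for each measurement; 0.0 is a perfect match."""
    p, m = ratios(photo), ratios(mesh)
    return {key: m[key] - p[key] for key in p}


# Hypothetical measurements: photo in pixels, mesh in centimetres.
photo = {"crown": 1800, "chin": 1575, "shoulder": 1500, "hip": 900, "floor": 0}
mesh = {"crown": 180.0, "chin": 162.0, "shoulder": 150.0, "hip": 90.0, "floor": 0.0}
print(ratio_errors(photo, mesh))  # head is about -0.025: the mesh head reads too short
```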

The UV-mapped mesh goes into Substance Painter, where the base texture is built from the photogrammetry diffuse bake. Skin details, including pores, subsurface variation, and pigment patches, are finished in Photoshop, because no procedural generator can replicate how a specific person's skin actually looks. Four maps carry the result: diffuse, normal, roughness, and displacement.

Texture maps: Diffuse, Normal, Roughness, Displacement
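A texture set can be checked mechanically before export. The sketch assumes a house convention that is my own, not the author's: all four maps present, one shared resolution, and power-of-two sizes, which real-time engines tend to handle most reliably for mipmapping. `check_texture_set` is a hypothetical helper that works on map names and pixel dimensions rather than image files.

```python
REQUIRED_MAPS = ("diffuse", "normal", "roughness", "displacement")


def is_power_of_two(n):
    return n > 0 and (n & (n - 1)) == 0


def check_texture_set(maps):
    """Return a list of problems for a dict of map name -> (width, height) in pixels."""
    problems = [f"missing {name}" for name in REQUIRED_MAPS if name not in maps]
    sizes = set(maps.values())
    if len(sizes) > 1:
        problems.append(f"mixed resolutions: {sorted(sizes)}")
    for name, (width, height) in maps.items():
        if not (is_power_of_two(width) and is_power_of_two(height)):
            problems.append(f"{name}: {width}x{height} is not a power of two")
    return problems


print(check_texture_set({m: (4096, 4096) for m in REQUIRED_MAPS}))  # []
```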

Characters export as FBX, OBJ, or glTF with all maps packed. Because the base is Genesis 9, they drop cleanly into Blender, Cinema 4D, Maya, and Unreal with no retopology needed. They are currently distributed free to students at KNUST, with a planned public release on Sketchfab and CGTrader.
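For glTF, the four maps do not map one-to-one onto material slots. In core glTF 2.0, diffuse goes to `baseColorTexture`, normal goes to `normalTexture`, and roughness must sit in the green channel of `metallicRoughnessTexture` (metallic is read from blue). There is no core displacement slot, so displacement has to travel as a separate file or be baked into the geometry. The sketch below builds only the material-related part of a glTF document; the file names and the `pack_metallic_roughness` and `gltf_material_fragment` helpers are hypothetical, and a complete file also needs `asset`, `meshes`, and the other top-level properties.

```python
import json


def pack_metallic_roughness(roughness, metallic=None):
    """Pack 0-255 roughness (and optional metallic) values into RGB pixels.

    glTF 2.0 samples roughness from G and metallic from B; R is ignored, so it is left at 0 here.
    """
    if metallic is None:
        metallic = [0] * len(roughness)  # skin is treated as non-metallic
    if len(metallic) != len(roughness):
        raise ValueError("roughness and metallic must have the same length")
    return [(0, r, m) for r, m in zip(roughness, metallic)]


def gltf_material_fragment(name, diffuse_uri, normal_uri, metal_rough_uri):
    """Material-related part of a glTF 2.0 document (images, textures, materials)."""
    images = [{"uri": uri} for uri in (diffuse_uri, normal_uri, metal_rough_uri)]
    textures = [{"source": i} for i in range(len(images))]
    material = {
        "name": name,
        "pbrMetallicRoughness": {
            "baseColorTexture": {"index": 0},
            "metallicRoughnessTexture": {"index": 2},
            # Factors multiply the texture values; 1.0 lets the maps drive the result.
            "metallicFactor": 1.0,
            "roughnessFactor": 1.0,
        },
        "normalTexture": {"index": 1},
        # No core glTF 2.0 slot exists for displacement; it is shipped separately.
    }
    return {"images": images, "textures": textures, "materials": [material]}


fragment = gltf_material_fragment("skin", "skin_diffuse.png", "skin_normal.png", "skin_metal_rough.png")
print(json.dumps(fragment, indent=2))
```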

Each character starts from a real Ghanaian subject. Some are rendered faithfully; others are pushed into stylised territory to show the range the pipeline supports. All are rigged, textured, and ready to export.

The goal at shape transfer is a one-to-one proportion match before texturing begins. The comparison below sets the Genesis 9 morph against the real subject's front-view reference photo.

Comparison: Reference Photo vs. Mesh (front view)
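The front-view comparison is visual. One way to put a number on "one-to-one" is the intersection over union (IoU) of two silhouettes: the segmented subject in the front reference photo and a render of the Genesis 9 morph from a matched camera. This is a hypothetical check, not part of the described pipeline; it assumes both masks are already aligned and the same size, and `silhouette_iou` is an illustrative helper.

```python
def silhouette_iou(mask_a, mask_b):
    """Intersection over union of two same-sized binary masks (lists of rows of 0/1)."""
    if len(mask_a) != len(mask_b) or any(len(ra) != len(rb) for ra, rb in zip(mask_a, mask_b)):
        raise ValueError("masks must have the same dimensions")
    intersection = union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            intersection += 1 if (a and b) else 0
            union += 1 if (a or b) else 0
    # Two empty masks are treated as a perfect match.
    return intersection / union if union else 1.0


photo_mask = [[0, 1, 1, 0], [0, 1, 1, 0]]
mesh_mask = [[0, 1, 1, 1], [0, 1, 1, 1]]
print(silhouette_iou(photo_mask, mesh_mask))  # 4 shared pixels out of 6 covered
```

IoU only measures the outline, so it complements rather than replaces the ratio checks against the A-pose photos.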


A real-time walkthrough of one complete character going through every stage of the pipeline, from photography session to final export.

These characters are distributed free to students at KNUST. The goal is to put authentic Ghanaian assets in the hands of the next generation of digital artists from day one, so they never have to darken a foreign stock image to tell a local story.

The longer-term plan is a public release on Sketchfab and CGTrader, feeding directly into the broader African digital assets platform project. These characters are its proof of concept.